For decades, mind-reading existed only in science fiction. Today, advances in artificial intelligence and neuroscience are turning something remarkably close to it into reality.

Scientists are now developing AI systems that can interpret patterns of brain activity and reconstruct images, speech and even fragments of thought. While these systems do not literally “read minds” in the telepathic sense, they can translate neural signals into meaningful output with growing accuracy.

The implications are profound — from restoring communication for paralyzed patients to raising urgent questions about mental privacy.

How Can AI “Read” Thoughts?

The human brain communicates through electrical and chemical signals. When we see an image, imagine a scene or think about a word, specific neural patterns activate.

AI systems can be trained to:

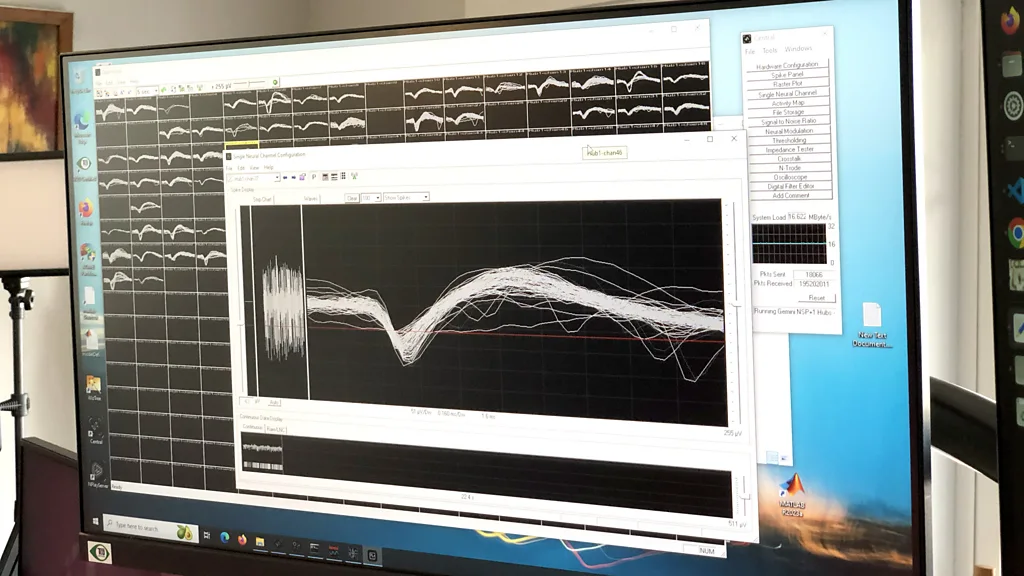

- Record brain activity using devices such as:

- Functional MRI (fMRI)

- Electroencephalography (EEG)

- Implanted electrodes

- Near-infrared spectroscopy

- Analyze patterns in this activity.

- Map those patterns to likely images, words or concepts using machine learning models.

In essence, the AI learns to associate brain signal patterns with corresponding outputs.

It does not hear an inner voice. It interprets measurable data.
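To make the association step concrete, here is a minimal Python sketch of one of the simplest possible decoders, a nearest-centroid classifier. It averages the recorded patterns for each label and assigns a new recording to the closest average. The feature vectors and labels (`face`, `house`, `tool`) are invented for illustration. Real decoders work with far noisier, higher-dimensional signals and more sophisticated models.

```python
import math
from statistics import mean

# Hypothetical training trials: each is a small feature vector extracted from a
# recording, paired with the label of what the participant was viewing.
# All values are made up for illustration.
TRAINING_TRIALS = [
    ([0.9, 0.1, 0.2], "face"),
    ([0.8, 0.2, 0.1], "face"),
    ([0.1, 0.9, 0.3], "house"),
    ([0.2, 0.8, 0.2], "house"),
    ([0.2, 0.3, 0.9], "tool"),
    ([0.1, 0.2, 0.8], "tool"),
]


def fit_centroids(trials):
    """Average the feature vectors recorded for each label."""
    by_label = {}
    for features, label in trials:
        by_label.setdefault(label, []).append(features)
    return {
        label: [mean(column) for column in zip(*vectors)]
        for label, vectors in by_label.items()
    }


def decode(features, centroids):
    """Return the label whose average pattern is closest (Euclidean distance)."""
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))


centroids = fit_centroids(TRAINING_TRIALS)
print(decode([0.85, 0.15, 0.25], centroids))  # prints: face
```

The decoder never "understands" faces or houses. It only knows which stored average a new pattern most resembles, which is why it needs labeled training data first.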

Reconstructing Images from Brain Activity

One breakthrough involves reconstructing images a person is looking at — or imagining — by analyzing brain scans.

Researchers show participants pictures while recording their brain activity. The AI model then learns the relationship between visual cortex activity and image features.

Later, when the participant views a new image, the system can generate a blurry but recognizable reconstruction.

Recent advances using generative AI models have dramatically improved clarity. Instead of producing abstract shapes, systems now create outputs that resemble the original subject matter.
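In its simplest form, learning the relationship between visual cortex activity and image features is a regression problem. The sketch below assumes a linear decoder fitted with ridge regression. The three voxels, the two image features and the rule used to generate the training targets are all hypothetical. Published reconstruction systems use thousands of voxels, feature spaces taken from deep neural networks and a generative model to turn predicted features into a picture. None of that is shown here.

```python
def solve_linear(a, b):
    """Solve a @ x = b for a square matrix a (Gaussian elimination, partial pivoting)."""
    n = len(a)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_ridge(activity, features, lam=1e-3):
    """Fit one weight vector per image feature: w = (X^T X + lam*I)^-1 X^T y."""
    n_vox = len(activity[0])
    xtx = [[sum(row[i] * row[j] for row in activity) + (lam if i == j else 0.0)
            for j in range(n_vox)] for i in range(n_vox)]
    weights = []
    for k in range(len(features[0])):
        xty = [sum(row[i] * f[k] for row, f in zip(activity, features))
               for i in range(n_vox)]
        weights.append(solve_linear(xtx, xty))
    return weights


def predict(weights, voxels):
    """Predict each image feature as a weighted sum of voxel activity."""
    return [sum(w * v for w, v in zip(wk, voxels)) for wk in weights]


# Hypothetical activity in 3 visual-cortex voxels on 6 training trials.
ACTIVITY = [[1, 0, 0], [0, 1, 0], [0, 0, 1], [1, 1, 0], [0, 1, 1], [1, 0, 1]]


def true_features(v):
    # Invented rule standing in for how two image features (say, brightness and
    # edge density) relate to voxel activity; real relationships are unknown and noisy.
    return [0.5 * v[0] + 0.2 * v[2], 0.3 * v[1] - 0.1 * v[0]]


FEATURES = [true_features(v) for v in ACTIVITY]
weights = fit_ridge(ACTIVITY, FEATURES)
new_scan = [0.6, 0.4, 0.9]  # activity recorded while viewing a "new" image
print(predict(weights, new_scan))  # approximately true_features(new_scan)
```

Because the training targets here come from an exact linear rule, the decoder recovers it almost perfectly. With real recordings the predicted features would only approximate the true ones.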

Decoding Speech and Language

Perhaps even more transformative is speech decoding.

For patients who cannot speak due to paralysis or neurological injury, AI-driven brain-computer interfaces (BCIs) offer hope.

In some experiments:

- Participants silently think of words or sentences.

- AI analyzes neural activity in language-processing areas.

- The system generates corresponding text output.

This technology could allow locked-in patients to communicate fluidly, in some cases for the first time since losing the ability to speak.

Whereas earlier systems relied on invasive implants, some newer approaches use non-invasive brain imaging, although implants still tend to provide higher precision.
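The text-generation step listed above can be sketched as a classifier that scores each word in a small vocabulary for every window of neural activity, then converts the scores into probabilities with a softmax. The vocabulary and the scores below are hypothetical. The point is that the output is a best guess with an attached probability, not a certain readout.

```python
import math

# Hypothetical vocabulary for a small communication aid.
VOCAB = ["I", "am", "thirsty", "tired", "hello"]


def softmax(scores):
    """Convert raw decoder scores into probabilities that sum to 1."""
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def decode_window(scores):
    """Pick the most probable word for one window of neural activity."""
    probs = softmax(scores)
    best = max(range(len(VOCAB)), key=lambda i: probs[i])
    return VOCAB[best], probs[best]


# Hypothetical classifier scores for three successive windows of activity.
windows = [
    [3.0, 0.5, 0.2, 0.1, 1.0],
    [0.3, 2.5, 0.4, 0.2, 0.1],
    [0.1, 0.2, 1.4, 1.1, 0.0],
]
for scores in windows:
    word, p = decode_window(scores)
    print(f"{word} (p={p:.2f})")
```

In the third window, `thirsty` wins with well under 50% probability because `tired` scores almost as high. Many published speech decoders also combine such per-window probabilities with a language model that favors plausible word sequences; that step is omitted here.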

Thought vs. Intention

It’s important to clarify a misconception:

AI cannot access private, unstructured thoughts without cooperation.

Current systems require:

- The participant’s consent

- Controlled experimental settings

- Training data from the same individual

Models must be personalized, because brain activity patterns vary significantly between individuals.

This is not passive surveillance technology — at least not yet.

Medical Applications

1. Restoring Communication

AI-based BCIs can help stroke victims or ALS patients express themselves.

2. Prosthetic Control

Brain signals can guide robotic limbs or cursors on a screen.

3. Mental Health Research

Decoding emotional states may aid understanding of depression, anxiety or PTSD.

4. Early Neurological Diagnosis

AI may detect subtle brain pattern changes associated with Alzheimer’s or epilepsy.

The medical potential is vast.

The Role of Generative AI

Modern generative models enhance decoding capabilities.

When AI attempts to reconstruct an image or sentence, generative systems fill in plausible details based on learned patterns.

For example:

- If brain activity suggests “dog,” a generative model produces a realistic dog image.

- If neural signals indicate “walking on beach,” AI generates a coherent scene.

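Generative AI bridges gaps where brain signals are incomplete or noisy. The trade-off is that some details in the output come from the model's training data rather than from the brain recording itself.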
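A full generative model is too large to sketch here, so the example below substitutes a much simpler technique, retrieval: a noisy decoded feature vector is matched to the closest entry in a small library of plausible outputs. The library, its three feature dimensions and the decoded vectors are all invented. It still shows the key idea that the final output contains detail (a beach, a person) supplied by the model's stored knowledge rather than by the signal itself.

```python
import math

# Hypothetical library of plausible outputs, each with an invented feature
# vector over three dimensions: (animal, outdoor, motion).
LIBRARY = {
    "a dog sitting on grass": [0.9, 0.8, 0.2],
    "a person walking on a beach": [0.1, 0.9, 0.9],
    "a cup of coffee on a desk": [0.0, 0.1, 0.1],
}


def complete(decoded):
    """Return the library item closest (Euclidean distance) to a noisy decoded vector."""
    return min(LIBRARY, key=lambda text: math.dist(decoded, LIBRARY[text]))


# A noisy decode in which part of the "motion" signal was lost.
print(complete([0.2, 0.8, 0.5]))  # prints: a person walking on a beach
```

A real generative model synthesizes new output instead of choosing from a fixed list, but the risk is the same: when the signal is weak, the model's prior does more of the work.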

The Privacy Question

As capabilities improve, concerns about mental privacy intensify.

Key ethical questions include:

- Who owns brain data?

- Could employers or governments misuse neural decoding?

- Should there be “cognitive liberty” rights?

- How secure are brain-computer interfaces?

Unlike passwords or biometric identifiers, thoughts are deeply personal and continuously generated.

Neural data protection may become one of the defining ethical debates of the 21st century.

Technical Limitations

Despite dramatic headlines, significant barriers remain:

- High-resolution imaging equipment, such as fMRI scanners, is bulky and expensive.

- Non-invasive methods have limited resolution.

- Decoding accuracy depends on prior training data.

- Results are probabilistic, not exact.

- Real-time decoding is computationally demanding.

We are still far from spontaneous thought extraction.

Military and Commercial Implications

Governments are investing in brain-interface research for potential defense applications.

Private companies are exploring consumer brain-interface devices for gaming, accessibility and productivity enhancement.

The convergence of AI, neuroscience and wearable technology could accelerate innovation — and risk.

The Future of Brain-AI Integration

Possible future developments include:

- Lightweight wearable brain sensors

- Faster neural decoding algorithms

- Direct brain-to-text messaging

- Augmented cognition systems

- Memory enhancement interfaces

The line between assistive technology and cognitive augmentation may blur.

Frequently Asked Questions (FAQ)

Q: Can AI literally read my thoughts?

No. Current systems require cooperation, specialized equipment and training data.

Q: How accurate are these systems?

Accuracy varies. Some speech-decoding systems achieve high accuracy in controlled settings, but real-world reliability remains limited.

Q: Are brain implants required?

Not always. Non-invasive methods exist, but implants provide more precise signals.

Q: Is this technology safe?

Invasive implants carry medical risks. Non-invasive methods are generally safer but less precise.

Q: Could governments misuse this technology?

Theoretically, yes — which is why ethical and legal safeguards are critical.

Q: Will this become common consumer technology?

It’s possible, but widespread adoption would require significant technological miniaturization and regulatory approval.

Q: What is cognitive liberty?

The right to mental privacy and control over one’s own brain data.

Conclusion

AI is not reading minds in the mystical sense — but it is learning to decode patterns of neural activity with increasing sophistication.

The technology holds extraordinary promise for medicine and communication. Yet it also forces society to confront a new frontier of privacy and autonomy.

For the first time in history, thoughts — once entirely private — are becoming measurable data.

How we govern that power may shape the future of human freedom itself.

Source: BBC