For years, “superintelligence” sounded like science fiction.

Now it has hundreds of millions in funding, elite researchers, and a real company trying to build it.

A new AI startup called Recursive Superintelligence has emerged from stealth with hundreds of millions of dollars in initial funding and a valuation approaching the multibillion-dollar range, along with ambitions that depend on much larger long-term investment. The company has recruited some of the most respected names in artificial intelligence research to pursue one of the most controversial goals in technology:

Creating AI systems capable of improving themselves autonomously.

And honestly?

That single idea may become the most important — and dangerous — technological development of the century.

What “Self-Improving AI” Actually Means

Most current AI systems are powerful but static: once trained, they do not redesign themselves.

Humans still:

- design architectures

- choose training methods

- optimize models

- manage infrastructure

- evaluate outputs

Recursive Superintelligence wants to change that.

The company’s vision centers on recursive self-improvement, the idea that AI systems could eventually:

- analyze their own weaknesses

- redesign parts of themselves

- test improvements

- deploy better versions autonomously

- repeat the cycle continuously

In theory, that creates a feedback loop where intelligence accelerates itself.

AI improving AI.

Then improving the improved AI again.

And again.

That process is what researchers have long called an intelligence explosion.
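
As a rough illustration, the cycle above (propose a change, test it, keep it only if it helps, repeat) can be sketched in a few lines of Python. Everything here is hypothetical: `evaluate`, `propose`, and `improve` are made-up names, the "system" is just three numbers, and the benchmark is a toy scoring function. It is also not truly recursive, because the loop tunes parameters against a fixed test and never rewrites its own improvement procedure, which is the part researchers consider hard.

```python
import random


def evaluate(params):
    """Hypothetical benchmark: higher is better, with a maximum of 0.0."""
    target = [3.0, -1.0, 2.0]  # made-up "ideal" settings for this toy
    return -sum((p - t) ** 2 for p, t in zip(params, target))


def propose(params, rng, step=0.5):
    """'Redesign' step: randomly nudge each parameter a little."""
    return [p + rng.uniform(-step, step) for p in params]


def improve(params, rounds=200, seed=0):
    """Propose, test, and keep a candidate only when it scores better."""
    rng = random.Random(seed)  # seeded so repeated runs match
    best, best_score = list(params), evaluate(params)
    history = [best_score]
    for _ in range(rounds):
        candidate = propose(best, rng)
        score = evaluate(candidate)
        if score > best_score:  # "deploy" only a tested improvement
            best, best_score = candidate, score
        history.append(best_score)
    return best, history


if __name__ == "__main__":
    final, history = improve([0.0, 0.0, 0.0])
    print(f"score before: {history[0]:.3f}")
    print(f"score after:  {history[-1]:.3f}")
```

Run as a script, it prints the score before and after the loop. The score can only stay flat or rise, because a candidate replaces the current version only when it tests better.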

Why Silicon Valley Is Suddenly Obsessed With This

Here’s the thing most people miss:

Much of the AI industry no longer believes scaling alone is enough.

For years, companies improved AI primarily through:

- bigger datasets

- more compute

- larger models

- more GPUs

But researchers increasingly believe the next leap may require systems that can:

- automate research itself

- discover novel algorithms

- optimize their own training processes

- generate scientific breakthroughs independently

That is the deeper ambition behind Recursive Superintelligence.

The company reportedly wants AI capable of conducting automated scientific discovery through continuous experimentation and self-directed improvement.

This is not just about making chatbots smarter.

It is about building systems that participate in their own evolution.

The Talent Behind the Project

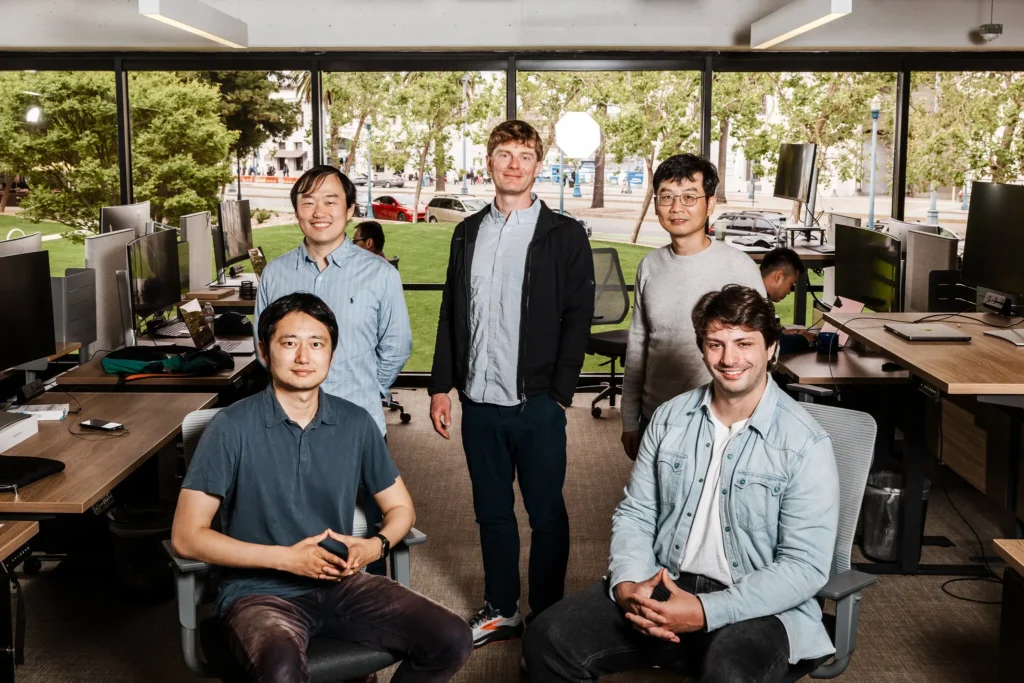

The startup’s founding team includes several highly influential AI researchers and former leaders from major AI companies.

Many of them previously worked at organizations like:

- OpenAI

- Google DeepMind

- Meta

- Nvidia-linked AI initiatives

Christopher Manning, a respected figure in modern AI research, publicly highlighted the project’s launch and described the goal as building AI that can improve itself safely.

That combination of money, talent, and ambition immediately turned the company into one of the most closely watched AI startups in the world.

Why Researchers Believe Recursive AI Is Actually Possible

A decade ago, recursive self-improvement sounded mostly theoretical.

Now researchers point to early examples in current systems.

Experimental systems can already:

- generate code

- evaluate solutions

- refine outputs iteratively

- optimize workflows autonomously

- improve task performance through feedback loops

Some research projects have demonstrated primitive forms of AI-assisted self-improvement.

The systems are nowhere near autonomous superintelligence yet.

But many researchers believe the trend line matters more than the current capability.

And the trend is accelerating.

Why This Terrifies AI Safety Researchers

This is where the story gets serious.

Recursive self-improvement is not just another product category.

It is one of the core scenarios behind many existential AI risk warnings.

The fear is simple:

If AI becomes capable of improving itself faster than humans can monitor or control it, progress could become extremely difficult to predict or contain.

Some researchers warn that systems capable of autonomously building improved successors could emerge within a few years.

That possibility changes everything.

Because once recursive improvement begins, progress may no longer be linear.

It could become exponential.
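
To make the difference concrete, here is a small sketch comparing the two growth patterns. It is a toy model, not a forecast: the function names, the starting level of 1.0, and the 10% rate are all assumptions chosen for illustration. In the linear case capability rises by a fixed amount each step; in the compounding case each gain is proportional to the current level, which is the assumption behind the "exponential" scenario.

```python
def linear_growth(start, gain, steps):
    """Capability rises by the same fixed amount every step."""
    levels = [start]
    for _ in range(steps):
        levels.append(levels[-1] + gain)
    return levels


def compounding_growth(start, rate, steps):
    """Capability rises by a fixed fraction of its current level every step."""
    levels = [start]
    for _ in range(steps):
        levels.append(levels[-1] * (1 + rate))
    return levels


if __name__ == "__main__":
    # Illustrative numbers only: both curves start at 1.0 and gain 0.1 on step one.
    linear = linear_growth(1.0, 0.1, 50)
    compounding = compounding_growth(1.0, 0.1, 50)
    for step in (0, 10, 25, 50):
        print(f"step {step:2d}: linear {linear[step]:6.2f}  "
              f"compounding {compounding[step]:7.2f}")
```

Both curves look almost identical for the first few steps. After 50 steps the linear one has reached 6 times its starting level, while the compounding one has passed 100 times. That early similarity is part of why the trend is hard to judge from current capability alone.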

The Real Goal Isn’t Smarter Chatbots

A lot of public discussion still focuses on assistants:

- writing emails

- generating images

- summarizing documents

But the frontier labs are thinking far beyond that.

The long-term ambition increasingly involves:

- scientific research automation

- autonomous engineering

- automated drug discovery

- materials science breakthroughs

- robotics optimization

- infrastructure design

- AI-run laboratories

Recursive Superintelligence reportedly wants systems capable of discovering new knowledge the way human scientists do.

That’s a completely different category of machine intelligence.

Why Investors Are Pouring Billions Into This Anyway

At first glance, self-improving AI sounds wildly risky.

But investors see something else:

Potentially unlimited economic leverage.

If an AI system could:

- improve software development

- accelerate scientific breakthroughs

- automate R&D

- optimize infrastructure

- invent new technologies

the economic value could be enormous.

That is why major venture firms and tech-backed funds are racing into this space.

Silicon Valley increasingly believes the first company to achieve scalable recursive improvement could dominate the next era of civilization-scale computing.

Yes, that sounds dramatic.

But that is genuinely how many investors and researchers now think.

The Strange Split Inside the AI Industry

Two things are happening at once:

Some companies are racing toward superintelligence.

Others are warning humanity about it.

And sometimes they are the same companies.

The industry is simultaneously:

- accelerating

- competing

- warning

- fundraising

- commercializing

- advocating caution

It’s an unusual moment in technological history.

The builders themselves are openly uncertain about where this leads.

The Biggest Question: Can Humans Still Control the Process?

This is the central issue underneath the hype.

Recursive systems introduce difficult problems:

- alignment

- verification

- interpretability

- containment

- governance

- accountability

Because if an AI redesigns itself, humans may not fully understand:

- why changes work

- how capabilities evolved

- what new behaviors emerged

- what hidden risks appeared

That creates a governance challenge unlike anything society has faced before.

Traditional software can be audited line by line.

Self-improving AI could change faster than humans can meaningfully inspect it.

Why This Could Become AI’s “Nuclear Moment”

Historically, some technologies fundamentally changed civilization:

- electricity

- nuclear weapons

- the internet

- industrial automation

Many researchers believe recursive superintelligence belongs in that category.

Because unlike previous technologies, it may:

- accelerate scientific progress itself

- reshape labor markets globally

- alter geopolitical power balances

- change economic systems

- challenge human intellectual dominance

And importantly:

It may improve faster than society can adapt.

That possibility is why governments, researchers, and tech executives are paying close attention.

Frequently Asked Questions (FAQ)

What is Recursive Superintelligence?

It is a startup focused on building AI systems capable of recursively improving themselves through automated experimentation and optimization.

What does recursive self-improvement mean?

It refers to AI systems improving their own architecture or training processes repeatedly without direct human redesign.

Why is this important?

Because it could dramatically accelerate technological and scientific progress.

Why are experts worried?

Because self-improving AI could eventually evolve faster than humans can safely monitor or control.

Is this AGI?

Not exactly. AGI usually refers to AI with human-level general capability; recursive self-improvement goes a step further by focusing on systems that keep improving themselves.

Are AI systems already self-improving?

Only in limited experimental ways. Full autonomy does not yet exist.

Why is investor interest so high?

Because the economic and technological upside could be unprecedented.

Could this be regulated?

Possibly, but regulation is still developing and lags behind the pace of research.

Final Thought

The AI race is no longer just about building smarter systems.

It is about building systems that can build better versions of themselves.

And once that loop starts to work reliably, everything else — speed, power, competition, control — begins to change at a scale society may not be ready for.

Sources: The New York Times