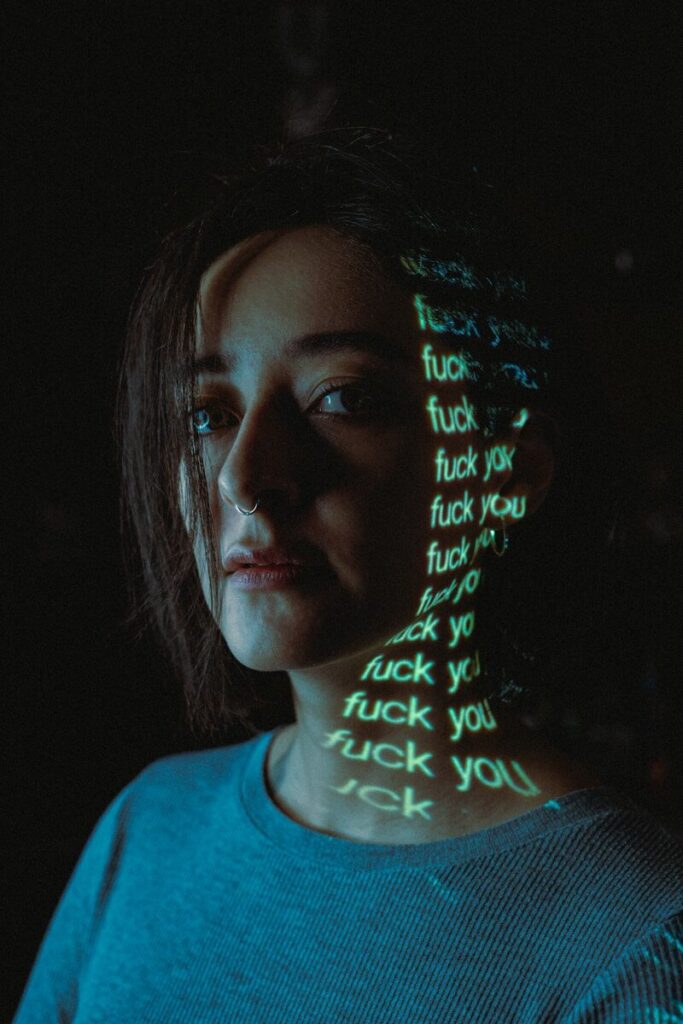

In recent years, technological innovation has promised a future of greater opportunity, connection and empowerment. But for millions of women and girls around the world, technology has become a source of harassment, discrimination and harm rather than liberation. From abuse amplified by artificial intelligence to systemic gender bias built into digital systems, technology today not only reflects society’s inequalities but intensifies them.

AI Abuse: Deepfakes, Non-Consensual Images and Misuse

One of the most alarming forms of tech-enabled harm is the explosion of non-consensual, AI-generated content, particularly intimate images. AI tools have been used to generate and post millions of abusive, sexually explicit images of women without their consent. Users manipulate images to add violent elements, and deepfakes depicting sexual abuse circulate widely online.

Applications that digitally remove clothing from uploaded photos have proliferated across app stores, generating massive downloads and significant revenue for developers. Platform operators often profit from these tools as well.

The human consequences are severe: young women dropping out of school, victims developing post-traumatic stress disorder, women losing jobs or facing threats of honor-based violence, and children being coerced into sexual acts under blackmail.

Technology and Real-World Harm

Online abuse does not stay online. Research shows that a significant number of women journalists, activists and public figures who experience digital harassment also face real-world threats, including stalking, physical assault, harassment at home and coordinated intimidation campaigns.

Domestic abuse organizations report a rise in tech-enabled coercive control. Smart home devices, location tracking, spyware, wearable tech and AI tools are increasingly used to monitor, threaten and control women. In abusive relationships, technology can become another tool of surveillance and psychological manipulation.

Bias Built Into Systems: AI, Employment and Decision Making

Technology is not only misused by individuals; structural bias can also be embedded in automated systems themselves. Artificial intelligence models are trained on historical data. If that data reflects inequality, the AI may reproduce and amplify it.

AI systems used in hiring, credit scoring, healthcare and policing have been found to show patterns of gender bias. Women may be screened out of job opportunities, offered fewer financial products, or misdiagnosed in medical contexts due to flawed datasets and biased assumptions.
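One simple way to look for such patterns is to compare how often an automated system produces a favorable outcome for each group. The Python sketch below is a minimal illustration of that idea, not the method of any particular auditor or vendor. The screening records, group labels, function names and the 0.8 review threshold are all assumptions chosen for the example.

```python
from collections import defaultdict

# Hypothetical screening outcomes: (group, was_shortlisted).
# These records are invented purely for illustration.
decisions = [
    ("women", True), ("women", False), ("women", False), ("women", False),
    ("men", True), ("men", True), ("men", False), ("men", False),
]

# Illustrative cut-off below which a gap is flagged for human review.
# This value is an assumption for the sketch, not a legal standard.
REVIEW_THRESHOLD = 0.8


def selection_rates(records):
    """Return the share of favorable outcomes for each group."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratio(rates, group, reference):
    """Ratio of one group's selection rate to a reference group's rate."""
    return rates[group] / rates[reference]


rates = selection_rates(decisions)
ratio = impact_ratio(rates, "women", "men")
needs_review = ratio < REVIEW_THRESHOLD

print(rates)
print(f"impact ratio: {ratio:.2f}, flag for review: {needs_review}")
```

In this made-up data, women are shortlisted at half the rate of men, so the check flags the system for review. A low ratio does not prove discrimination on its own, but it indicates where a closer look at the training data and decision rules may be warranted.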

Image generation models and recommendation algorithms also reinforce stereotypes, underrepresenting women in leadership or technical roles while overrepresenting them in traditional or domestic settings.

Underrepresentation in Tech Creation

A core issue lies in who builds the technology. Women remain underrepresented in AI research, engineering leadership and venture capital funding. Female founders receive a disproportionately small share of investment capital, limiting their ability to shape the digital landscape.

When development teams lack diversity, blind spots increase. Products may launch without considering safety implications for women, and harms are often addressed only after public backlash.

Workplace Inequality in the Tech Industry

Within the tech workforce itself, women face persistent barriers:

- Underrepresentation in senior technical and executive roles

- Pay gaps and limited advancement opportunities

- Higher attrition rates due to hostile or exclusionary environments

- Reduced mentorship and sponsorship networks

Many women leave tech careers early, citing burnout, discrimination and lack of belonging. This further shrinks the pool of diverse voices influencing future innovation.

Regulation and Governance Challenges

Regulatory systems often lag behind technological innovation. While some governments are introducing laws to criminalize deepfakes and mandate quicker removal of non-consensual content, enforcement remains inconsistent.

Experts argue that reactive measures are not enough. Proactive regulation, algorithmic transparency, bias auditing, corporate accountability and stronger digital rights protections are necessary to prevent harm before it scales.

Frequently Asked Questions (FAQs)

1. Why does technology disproportionately harm women?

Technology reflects existing societal inequalities. When biased data, poor moderation systems and weak safeguards intersect, women are often disproportionately targeted and harmed.

2. What are deepfakes and why are they harmful?

Deepfakes are AI-generated synthetic media that can realistically manipulate someone’s appearance or voice. Non-consensual deepfakes can damage reputations, cause psychological trauma and create serious safety risks.

3. How does AI create gender bias?

AI learns from historical data. If past patterns show discrimination or inequality, the system can encode and repeat those patterns in automated decisions.

4. Is online harassment linked to offline violence?

Yes. Digital harassment frequently escalates into real-world threats, stalking or physical harm. Online abuse can embolden perpetrators and normalize hostility.

5. What role do tech companies play?

Platforms design the systems that enable content sharing and AI deployment. Their policies, safety measures and business incentives significantly influence whether harm is prevented or amplified.

6. What solutions can reduce tech-based harm against women?

Solutions include stronger regulation, better AI auditing, platform accountability, improved reporting tools, investment in women-led innovation and increasing representation in tech leadership.

7. Why does representation in tech matter?

Diverse teams are more likely to anticipate harms, build inclusive products and prioritize safety. Representation influences design choices and corporate culture.

Sources: Financial Times