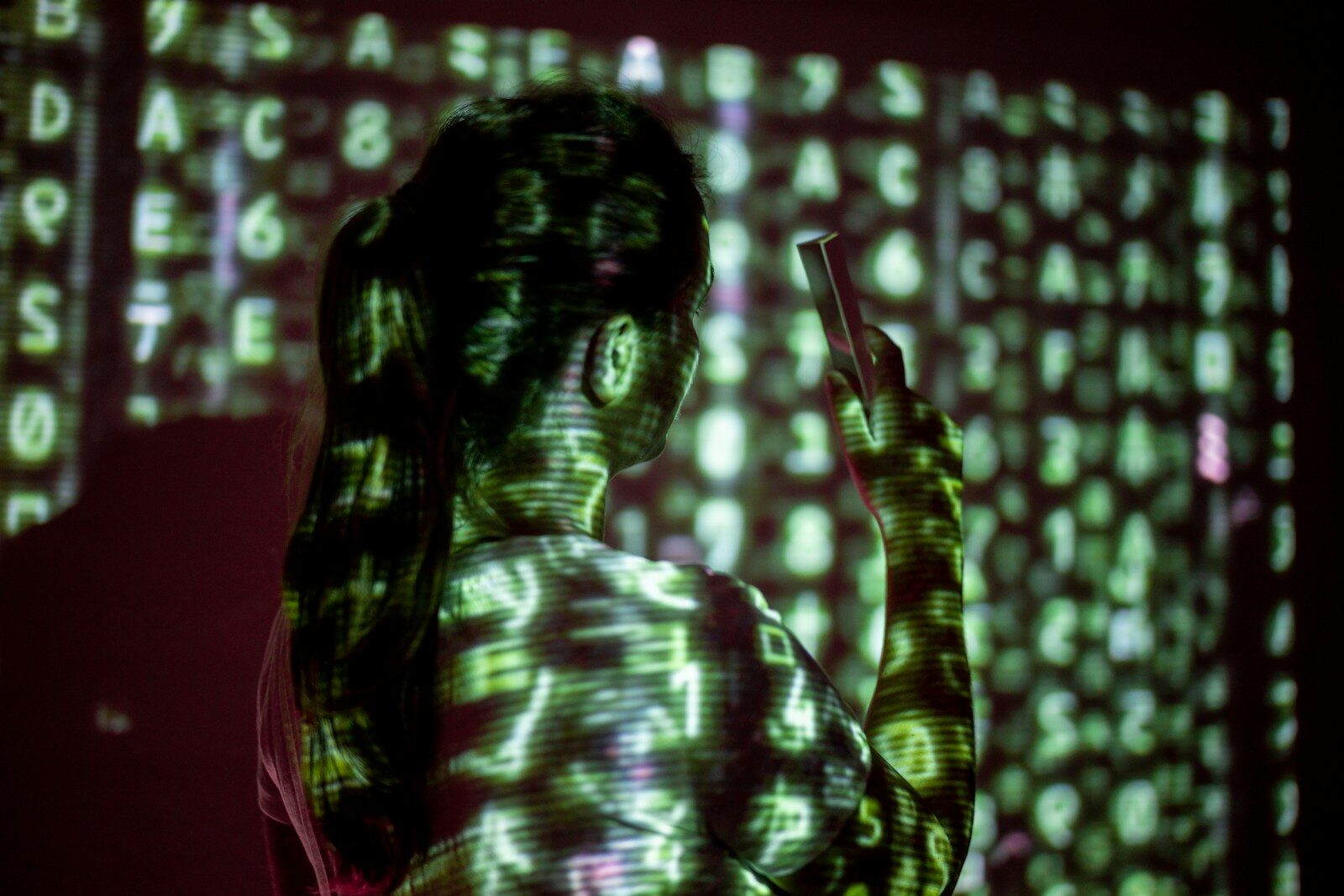

When Warnings Turn Into Influence

I’m sure you’ve seen them.

👉 Experts, influencers, and viral posts warning that AI could:

- Destroy jobs

- Manipulate society

- Even threaten humanity

These voices are often called:

👉 “AI doomers”

And they’re gaining attention fast.

But here’s the real question:

👉 Are they protecting us—or amplifying fear beyond reality?

🧠 Who Are “AI Doomers”?

AI doomers are:

- Researchers

- Tech insiders

- Influencers

- Public commentators

Who focus on:

👉 The risks and dangers of artificial intelligence

Their concerns include:

- Loss of control over AI systems

- Misuse by bad actors

- Long-term existential threats

⚠️ Why Their Message Is Spreading

1. Fear Travels Faster Than Facts

Content that:

- Warns

- Alarms

- Predicts danger

👉 Spreads more quickly online.

AI doom narratives:

👉 Capture attention instantly.

2. Real Risks Do Exist

Let’s be clear:

AI isn’t risk-free.

Concerns about:

- Bias

- Misinformation

- Security

👉 Are valid.

3. Complexity Creates Anxiety

Most people don’t fully understand AI.

👉 This uncertainty leads to:

- Fear

- Speculation

- Worst-case thinking

4. Tech Leaders Are Also Warning

When insiders say:

👉 “We should be careful”

It adds:

- Credibility

- Urgency

🔍 What the Original Article Didn’t Fully Explore

Let’s go deeper into the dynamics behind AI doom culture:

1. The “Attention Economy” Incentive

Influencers gain:

- Views

- Followers

- Engagement

👉 Extreme predictions often perform better than balanced ones.

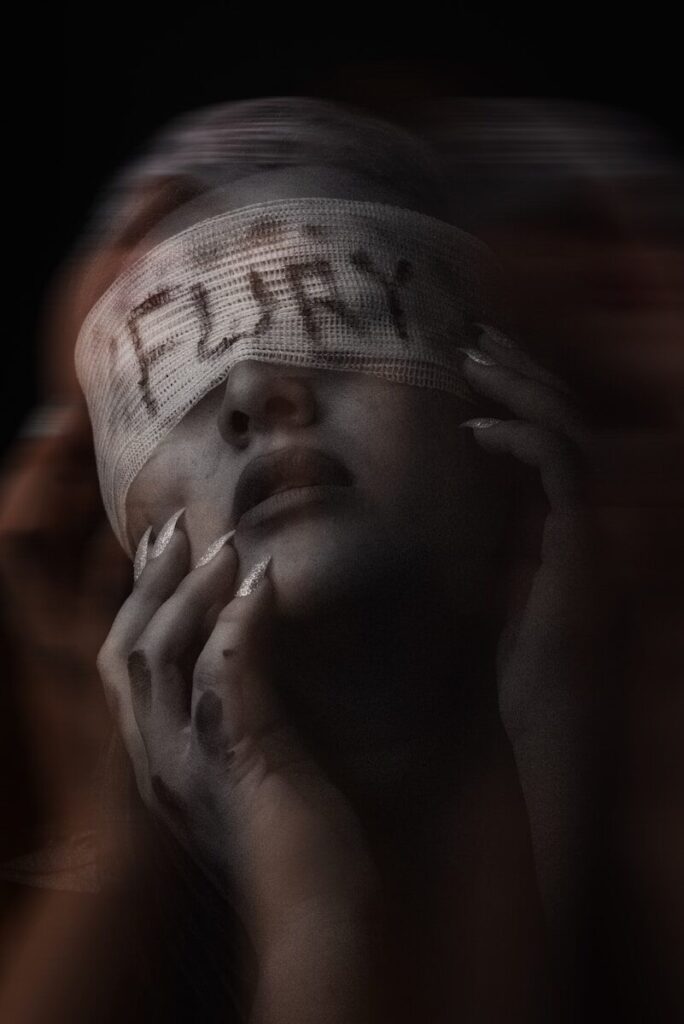

2. Fear as a Form of Control

Some narratives may:

- Push for stricter regulation

- Shape public opinion

- Influence policy

👉 Fear can drive decisions faster than facts.

3. The Blurring Line Between Experts and Influencers

Not all voices are equal.

Some are credible researchers.

Others amplify ideas without deep expertise.

👉 Audiences struggle to tell the difference.

4. Psychological Impact on Society

Constant exposure to AI fear can cause:

- Anxiety

- Distrust

- Resistance to innovation

👉 This affects how people engage with technology.

5. The Risk of Ignoring Real Benefits

Focusing only on risks can:

- Overshadow positive use cases

- Slow adoption of helpful tools

⚖️ The Balance: Risk vs Reality

AI has:

✅ Real Benefits

- Medical breakthroughs

- Productivity gains

- Improved services

⚠️ Real Risks

- Misuse

- Bias

- Security vulnerabilities

👉 The challenge is:

Staying aware of the risks without tipping into panic.

🧩 Why This Debate Matters

Public perception influences:

- Government regulation

- Corporate decisions

- Technology adoption

👉 If fear dominates:

- Innovation slows

- Policies become restrictive

👉 If risks are ignored:

- Harm increases

🛠️ How to Navigate AI Fear

✅ 1. Verify Sources

Check:

- Who is speaking

- Their expertise

✅ 2. Avoid Extreme Narratives

Be cautious of:

- “AI will destroy everything”

- “AI is perfectly safe”

👉 Truth is usually in between.

✅ 3. Focus on Evidence

Look for:

- Data

- Research

- Real-world examples

✅ 4. Stay Informed, Not Alarmed

Awareness is good.

👉 Panic is not.

🔮 The Future: More Voices, More Debate

As AI evolves:

👉 The conversation will grow louder.

Expect:

- More warnings

- More optimism

- More disagreement

👉 This is normal for transformative technology.

❓ Frequently Asked Questions

1. What is an AI doomer?

Someone who emphasizes the risks and potential dangers of AI, often highlighting worst-case scenarios.

2. Are their concerns valid?

Some are.

👉 AI does pose real risks—but not all predictions are realistic.

3. Why are these voices so popular?

Because:

- Fear spreads quickly

- Uncertainty increases engagement

4. Should we be worried about AI?

Yes—but in a balanced way.

👉 Awareness without panic.

5. Can AI be controlled?

Efforts are ongoing:

- Regulation

- Safety research

- Ethical design

6. What’s the biggest risk of doom narratives?

👉 Creating unnecessary fear that slows progress.

🔥 Final Thought

AI is powerful.

It deserves attention.

It deserves caution.

But it doesn’t deserve blind fear.

Because in the end…

👉 The future of AI won’t be shaped by those who panic, but by those who understand both its risks and its potential.

Sources: The Washington Post