Artificial intelligence is evolving at breakneck speed. New models are released every few months, capabilities leap forward with each update, and adoption spreads globally almost overnight. But there’s a growing problem beneath the surface:

Scientific research on AI’s impacts is struggling to keep up.

By the time peer-reviewed studies are published, the systems they analyze may already be outdated. Policymakers, educators, and businesses are making high-stakes decisions in an environment where rigorous, long-term evidence lags far behind technological deployment.

This article explores why AI development is outpacing research, what risks that imbalance creates, how academia and regulators are responding, and what it means for the future of responsible innovation.

The Speed Mismatch: Innovation vs. Investigation

AI companies operate on rapid release cycles:

- Model upgrades every few months

- Continuous feature rollouts

- Large-scale beta testing with real users

In contrast, academic research often requires:

- Funding approvals

- Institutional review board (IRB) clearance

- Longitudinal data collection

- Peer review and publication timelines

This process can take years.

By the time a study evaluates one AI system’s effects, a more advanced version may already be in widespread use.
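
To make the mismatch concrete, here is a rough back-of-the-envelope sketch. The numbers are purely hypothetical placeholders (a 30-month study and a 4-month release interval), not figures from any study or company, and the function name is invented for illustration. It simply counts how many new model versions could ship while a single study is still in progress.

```python
def releases_during_study(study_months: float, release_interval_months: float) -> int:
    """Count how many full release cycles fit inside one study's duration."""
    if release_interval_months <= 0:
        raise ValueError("release interval must be positive")
    return int(study_months // release_interval_months)


# Hypothetical inputs, chosen only to illustrate "years" versus "every few months".
study_months = 30            # assumed: funding, IRB, data collection, peer review
release_interval_months = 4  # assumed: one model upgrade every four months

print(releases_during_study(study_months, release_interval_months))
```

Under these assumed inputs, several model generations ship before the study is published. Different assumptions change the count, but the gap persists whenever the study runs longer than a release cycle.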

Why This Gap Matters

1. Policy Decisions Without Evidence

Governments are drafting AI regulations while:

- Lacking long-term impact data

- Relying on early-stage research

- Responding to anecdotal cases

Regulation becomes reactive rather than proactive.

2. Educational Institutions Are Experimenting Blindly

Schools and universities are integrating AI tools into:

- Homework assignments

- Writing assistance

- Tutoring systems

But research on long-term learning outcomes remains limited.

Educators must make decisions before evidence stabilizes.

3. Workplace Transformation Without Labor Data

Companies are restructuring roles around AI productivity gains.

Yet comprehensive research on:

- Job displacement patterns

- Wage effects

- Career pathway compression

is still emerging.

Structural Reasons Research Lags Behind

Limited Access to Proprietary Models

Many frontier AI systems are controlled by private companies.

Researchers often lack:

- Full model access

- Training data transparency

- Internal performance metrics

Without access, independent study is constrained.

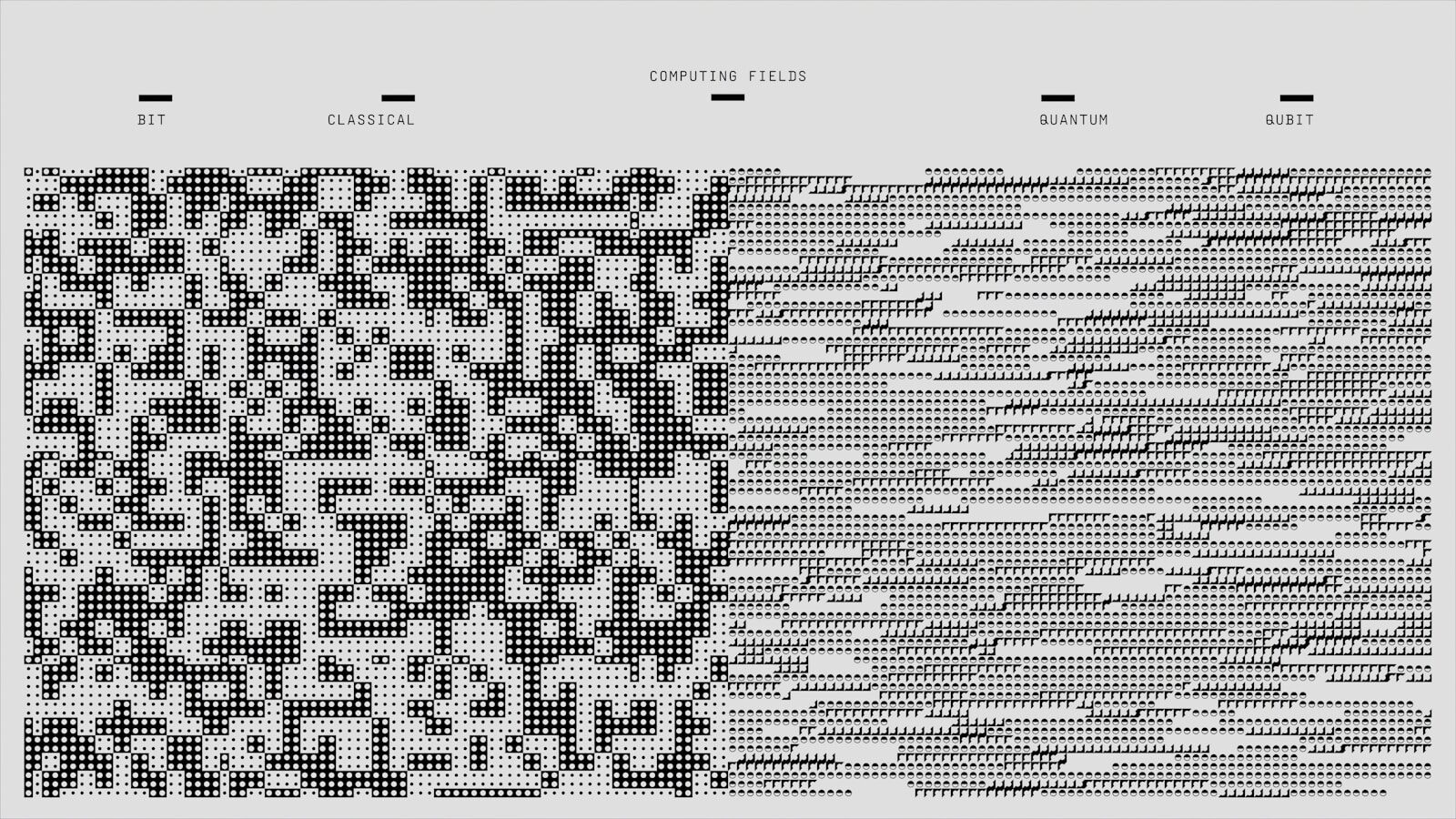

Rapid Model Evolution

AI capabilities improve with:

- Larger datasets

- Enhanced architectures

- Reinforcement learning updates

This moving target complicates longitudinal analysis.

Funding and Incentive Gaps

AI research funding often prioritizes:

- Technical innovation

- Model improvement

Social impact research may receive less investment, despite its growing importance.

What’s Often Overlooked

Real-World Testing Is the Default Experiment

Because formal research lags, society itself becomes the testing ground.

Millions of users generate behavioral data in real time, effectively conducting uncontrolled experiments.

Not All Research Is Academic

Private firms conduct internal safety testing and red-teaming.

However:

- Results are not always public

- Methodologies vary

- Independent verification may be limited

Transparency remains uneven.

Measurement Challenges Persist

AI impacts are difficult to quantify.

For example:

- How do we measure cognitive dependence on AI tools?

- How do we assess shifts in critical thinking?

- How do we isolate AI’s effect from broader digital trends?

Until questions like these have agreed-upon measures, clear answers will be slow to arrive.

Emerging Responses

Faster Research Cycles

Some scholars advocate for:

- Preprint publishing

- Rapid review models

- Open data collaborations

These approaches aim to narrow the lag.

Regulatory Sandboxes

Governments are experimenting with:

- Controlled pilot programs

- Phased deployment requirements

- Impact assessment mandates

This blends innovation with oversight.

Academic–Industry Partnerships

Collaborations may:

- Provide researchers with controlled access

- Share safety findings

- Accelerate responsible innovation

However, maintaining independence is essential.

Risks of Falling Too Far Behind

If research cannot keep pace:

- Harmful effects may go undetected

- Overhyped benefits may go unchallenged

- Policy may swing between extremes

- Public trust may erode

Evidence-based governance depends on credible, timely research.

Frequently Asked Questions

Is AI research really behind development?

Yes. Technological iteration cycles are significantly faster than traditional academic research timelines.

Why can’t researchers study AI faster?

Peer review, funding processes, ethical approvals, and access limitations slow rigorous study.

Does this mean AI is unsafe?

Not inherently. But incomplete evidence increases uncertainty in risk assessment.

What can be done to close the gap?

Greater transparency, faster publishing models, shared datasets, and collaborative frameworks can help.

Should AI development slow down?

Some experts advocate for cautious pacing, while others argue innovation should continue alongside strengthened oversight.

Final Thoughts

AI’s acceleration is a technological triumph, but it has created an epistemic gap.

When systems evolve faster than our ability to study them, society risks operating without a clear map.

Innovation without understanding can produce both breakthroughs and blind spots.

The challenge ahead is not merely building smarter machines.

It is ensuring our capacity to evaluate, govern, and comprehend them grows just as quickly.

Sources: Axios