Researchers have demonstrated that an artificial intelligence system can generate a scientific paper capable of passing peer review. The idea that a machine could produce research convincing enough to satisfy expert reviewers was long considered implausible; it is now a reality.

This milestone is not just a technical achievement; it exposes both the growing power of AI and the vulnerabilities within the scientific publishing system. As AI tools become more sophisticated, they are beginning to challenge one of the foundations of modern science: the trust that peer-reviewed research reflects genuine human inquiry and expertise.

The implications are profound, raising urgent questions about credibility, authorship and the future of knowledge itself.

How AI Generated a Peer-Reviewed Paper

Recent experiments have shown that advanced AI systems—particularly large language models—can:

- generate full-length research papers

- structure arguments using standard academic formats

- include citations and references

- mimic scientific tone and methodology

In some cases, these papers were submitted to conferences or journals and passed peer review, meaning human experts evaluated the work and deemed it acceptable for publication.

The AI did not “conduct research” in the traditional sense. Instead, it:

- synthesized existing knowledge

- generated plausible hypotheses

- constructed logical arguments

- formatted results in a convincing academic style

The result was a paper that appeared legitimate—even to trained reviewers.

Why Peer Review Didn’t Catch It

Peer review is designed to evaluate the quality, originality and validity of research.

However, the system has limitations that AI can exploit:

1. Time Constraints

Reviewers often work under tight deadlines and may not verify every detail.

2. Trust-Based System

Academic publishing relies heavily on trust that authors are presenting genuine work.

3. Increasing Volume of Submissions

Journals and conferences receive large numbers of papers, making thorough review difficult.

4. Plausibility Over Verification

Reviewers may assess whether a paper “sounds correct” rather than replicating results.

AI-generated papers can take advantage of these weaknesses by producing content that is highly plausible but not necessarily grounded in original research.
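To make the gap between plausibility and verification concrete, here is a minimal sketch of the kind of check a reviewer could run when raw data accompanies a submission: recompute a reported statistic and compare it with the number in the paper. The function name `reported_mean_matches`, the sample values and the rounding tolerance are all hypothetical and chosen for illustration; this is not a procedure any journal is known to use.

```python
import statistics


def reported_mean_matches(raw_values, reported_mean, decimals=2):
    """Check a reported mean against the mean recomputed from raw data.

    The comparison allows for rounding to `decimals` places, which is an
    assumption about how the paper reports its numbers.
    """
    recomputed = statistics.fmean(raw_values)
    tolerance = 0.5 * 10 ** (-decimals) + 1e-12
    return abs(recomputed - reported_mean) <= tolerance


# Hypothetical measurements and two hypothetical reported values.
measurements = [4.1, 3.9, 4.4, 4.0]
consistent = reported_mean_matches(measurements, 4.10)
inconsistent = reported_mean_matches(measurements, 4.60)
```

A check like this only confirms that the text agrees with the supplied data. It says nothing about whether the data were genuinely collected, which is why it complements rather than replaces expert judgment.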

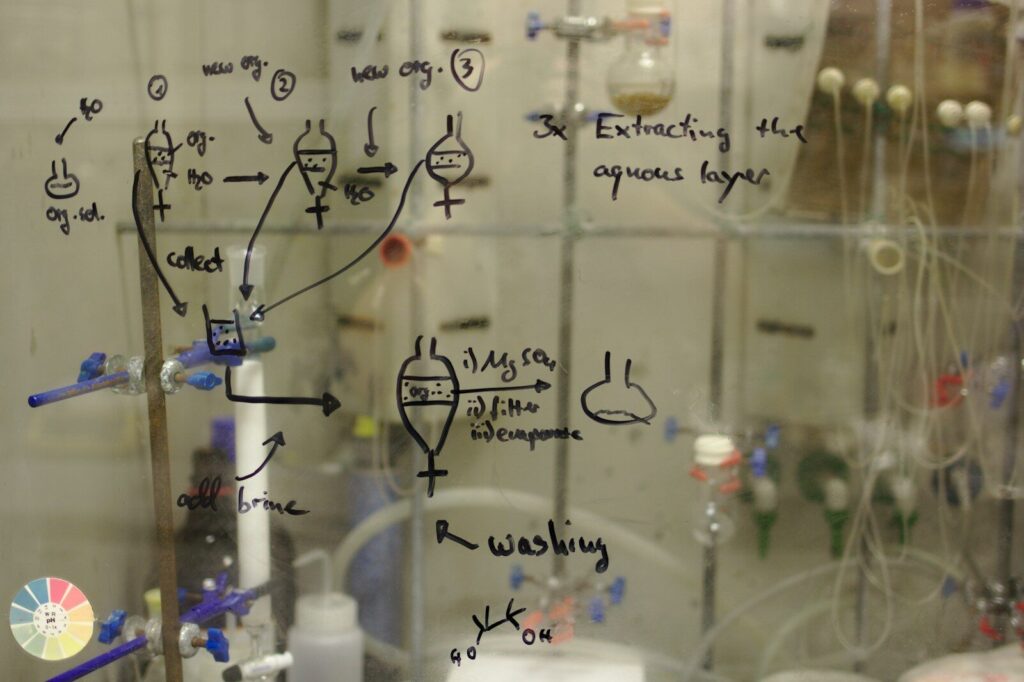

The Difference Between Writing and Doing Science

One of the key issues raised by this development is the distinction between:

- writing about science

- doing scientific research

AI is increasingly capable of the former.

It can:

- explain theories

- summarize literature

- generate hypotheses

But it does not:

- conduct experiments

- collect real-world data

- verify results through empirical testing

This creates a risk that papers appear scientifically valid without being based on actual discoveries.

Risks to Scientific Integrity

The ability of AI to generate publishable research introduces several risks.

1. Fake or Low-Quality Research

AI could be used to produce large volumes of papers with little or no real contribution.

2. Erosion of Trust

If readers cannot distinguish between human-written and AI-generated work, trust in scientific literature may decline.

3. Academic Misconduct

Researchers may use AI tools to inflate publication records or bypass rigorous research processes.

4. Misinformation in Science

Incorrect or fabricated findings could spread more easily.

The Potential Benefits of AI in Research

Despite these concerns, AI also offers significant advantages for scientific work.

Faster Literature Reviews

AI can analyze thousands of papers quickly, helping researchers identify trends and gaps.

Improved Writing Assistance

AI can help scientists communicate ideas more clearly.

Hypothesis Generation

AI can suggest new research directions based on existing data.

Data Analysis

Machine learning can uncover patterns in large datasets that humans might miss.

When used responsibly, AI can enhance—not replace—scientific research.

How Journals and Institutions Are Responding

Academic institutions and publishers are beginning to adapt.

Possible responses include:

Disclosure Requirements

Authors may be required to disclose AI use in research and writing.

Improved Detection Tools

New systems are being developed to identify AI-generated content.

Stronger Review Standards

Journals may emphasize verification of data and methods rather than just written arguments.

Ethical Guidelines

Organizations are creating frameworks for responsible AI use in research.

However, these measures are still evolving.

The Future of Peer Review

The rise of AI may force a fundamental rethink of peer review.

Future systems could include:

- AI-assisted review tools to detect inconsistencies

- more emphasis on reproducibility of results

- open peer review processes for greater transparency

- verification of underlying data and code

The goal will be to ensure that scientific credibility remains intact in an era of machine-generated content.
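As one illustration of what verifying underlying data and code could involve, the sketch below compares deposited files against a manifest of SHA-256 checksums, so a reviewer can confirm that the files under review are the ones the authors registered. The manifest format, the file names and the function `find_mismatches` are assumptions made for this example, not an existing journal workflow.

```python
import hashlib


def sha256_hex(content: bytes) -> str:
    """Return the SHA-256 digest of some file content as a hex string."""
    return hashlib.sha256(content).hexdigest()


def find_mismatches(files: dict[str, bytes], manifest: dict[str, str]) -> list[str]:
    """List manifest entries whose file is missing or has a different checksum."""
    mismatched = []
    for name, expected_digest in sorted(manifest.items()):
        content = files.get(name)
        if content is None or sha256_hex(content) != expected_digest:
            mismatched.append(name)
    return mismatched


# Hypothetical deposit: file contents are kept in memory to stay self-contained.
deposited = {
    "results.csv": b"sample,value\na,1.0\nb,2.0\n",
    "analysis.py": b"print('analysis placeholder')\n",
}
manifest = {name: sha256_hex(content) for name, content in deposited.items()}

# Simulate a data file that changed after the manifest was registered.
altered = dict(deposited)
altered["results.csv"] = b"sample,value\na,1.0\nb,9.9\n"
changed_files = find_mismatches(altered, manifest)
```

Checksums show only that files are unchanged since registration. Confirming that the code actually reproduces the reported results would still require rerunning it.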

The Bigger Question: What Is Authorship?

AI-generated research also challenges traditional notions of authorship.

If an AI writes a paper:

- Who is the author?

- Who is responsible for errors?

- Who owns the intellectual contribution?

These questions are not just academic—they have legal, ethical and professional implications.

Frequently Asked Questions (FAQ)

Q: Can AI really write scientific papers?

Yes. AI can generate structured, well-written papers that mimic academic style.

Q: Does AI conduct real scientific research?

No. AI synthesizes existing information but does not perform experiments or collect data.

Q: How did AI-generated papers pass peer review?

They were convincing enough in structure and language to meet reviewers’ expectations, despite lacking original research.

Q: Is this a threat to science?

It poses risks, particularly if used irresponsibly, but also offers opportunities to improve research processes.

Q: Can journals detect AI-generated papers?

Detection tools are improving, but identifying AI-generated content remains challenging.

Q: Should AI be banned in research?

Most experts believe AI should be used responsibly rather than banned entirely.

Q: What will change in scientific publishing?

Expect stricter guidelines, better verification methods and greater transparency in how research is produced.

Conclusion

The fact that an AI-generated paper can pass peer review marks a turning point for science.

It reveals both the extraordinary capabilities of modern AI and the vulnerabilities in systems designed for a different era.

The challenge now is not to stop progress, but to adapt. Scientific institutions must evolve to ensure that the integrity of research is preserved—even as the tools used to produce it become more powerful.

Because in the age of AI, the question is no longer whether machines can write science—but whether we can still trust what we read.

Source: Scientific American